Weapons of Mass Distraction

We have all experienced it. You're scrolling through social media and a post catches your eye. It makes a claim or shows you something that makes you say to yourself, "there is no way that's true," or it provokes an immediate emotional response, and before you know it, you have begun typing a comment laced with vitriolic outrage.

But pause there for a moment. Before your fingers hit the keys, before you share it, before you screenshot it and send it to three friends who you know will be just as furious as you are.

Ask yourself: Why that post? Why right now? Why you?

Here is what most people don't realize in that moment. That reaction you just had? That flash of anger, that spike of fear, the intoxicating certainty that you have just witnessed something outrageous and undeniable? That may not have happened to you by accident. It may have been engineered.

Not in some vague, paranoid sense. Literally engineered. Designed, tested, refined, and delivered to your specific screen at a specific moment calculated to produce exactly the neurological and emotional response you just experienced, based on a detailed psychological profile assembled from thousands of your previous clicks, pauses, shares and reactions. A profile you never consented to build and you likely don't know exists.

Welcome to the 21st century's most consequential battlefield, where there are no bombs, no uniforms and no declarations of war. The weapons are algorithms, fabricated personas, deepfake videos and carefully crafted narratives seeded across platforms you use every single day, including this one. The casualties of this war are trust, a coherent reality and the capacity of ordinary people to make sense of the world around them.

This is “cognitive warfare,” and unlike every other form of warfare in human history, it is being waged against you right now, not despite the fact that you're a civilian, but precisely because you are one.

The disturbing truth is that this is not a new idea. Governments, intelligence agencies and powerful private actors have been systematically studying, theorizing and deploying influence operations against human minds for over a century. What has changed is the scale, the precision and, above all, the technology, which has now reached a point where mass psychological manipulation is no longer the exclusive province of nation-states with billion-dollar black budgets. It is available to anyone with a laptop, a social media account, and a basic understanding of how outrage spreads.

What follows is a comprehensive report on how we got here, tracing the lineage of cognitive and influence warfare from its earliest institutional roots, through the Cold War machine that embedded propaganda into the foundations of Western media, through the digital revolution that cracked open the human psyche to algorithmic exploitation, all the way to where we stand today, at the threshold of an era in which artificial intelligence and neurotechnology threaten to make the manipulation of human thought not merely scalable but effectively invisible.

The implications are civilizational, and whether you like it or not, you are a part of this war.

Read carefully, because understanding this is itself a form of defense.

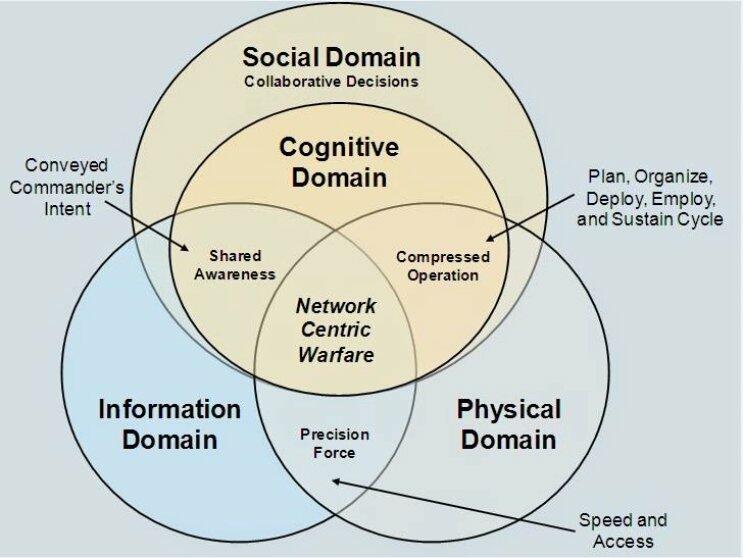

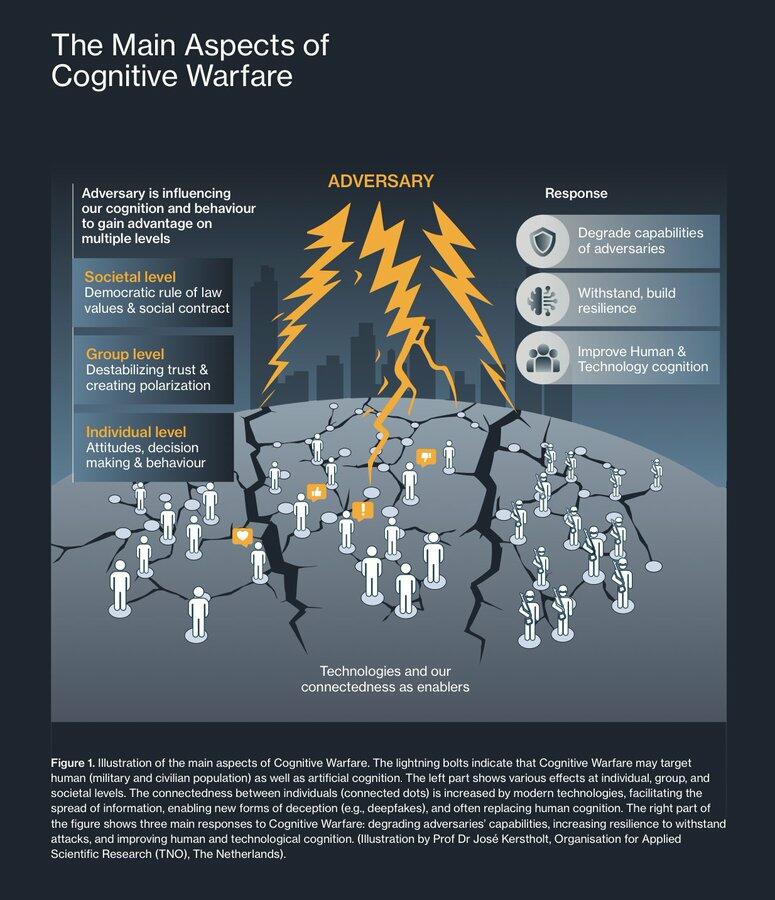

Cognitive warfare is the deliberate targeting of the human mind (belief formation, perception, decision-making and social trust) as a primary theater of conflict. It is not merely propaganda rebranded; it is a doctrinal evolution that treats the brain itself as the ultimate battlespace, synthesizing psychological operations, neuroscience, big data, artificial intelligence, and digital infrastructure into a unified instrument of power that operates 24/7, across borders and largely below the threshold of declared war.

NATO formally defined it as “activities conducted in synchronization with other instruments of power, to affect attitudes and behavior by influencing, protecting, or disrupting individual and group cognition to gain advantage over an adversary.” Taiwan's National Defense University has noted that the term “cognitive warfare” itself first appeared in a NATO report, describing it as an unconventional mode of warfare that exploits psychological biases and reflexive thinking through technological networks to “manipulate human cognition, induce changes in thought, and thereby cause negative impacts.”

Ancient Deception to Industrial Propaganda

The impulse to win wars through the mind rather than the body is as old as warfare itself. Sun Tzu codified deception as the foundational principle of all military strategy; Alexander the Great and Genghis Khan both weaponized fear, spectacle, and fabricated reputation to collapse enemy morale before a single arrow was loosed. These were crude predecessors to what would eventually become sophisticated, institutionalized systems of mass psychological manipulation.

The modern era of psychological warfare was born in the crucible of the World Wars. The term “psychological warfare” itself migrated from Germany to the United States in 1941. During World War II, both Allied and Axis powers poured enormous resources into the discipline. The most architecturally significant Allied psyop was Operation Fortitude — the multi-layered deception campaign supporting D-Day — which conjured entire fictional armies, leaked fabricated order-of-battle documents, and convinced the Germans that Patton was leading the real invasion force to Pas-de-Calais rather than Normandy. On the organizational front, President Roosevelt established the Office of the Coordinator of Information (COI) under Colonel William Donovan, which was subsequently split into the Office of War Information (OWI) and the Office of Strategic Services (OSS) — the direct institutional ancestor of the CIA. The OSS’s Morale Operations Branch was explicitly tasked with attacking “the morale and the political unity of the enemy through psychological means.”

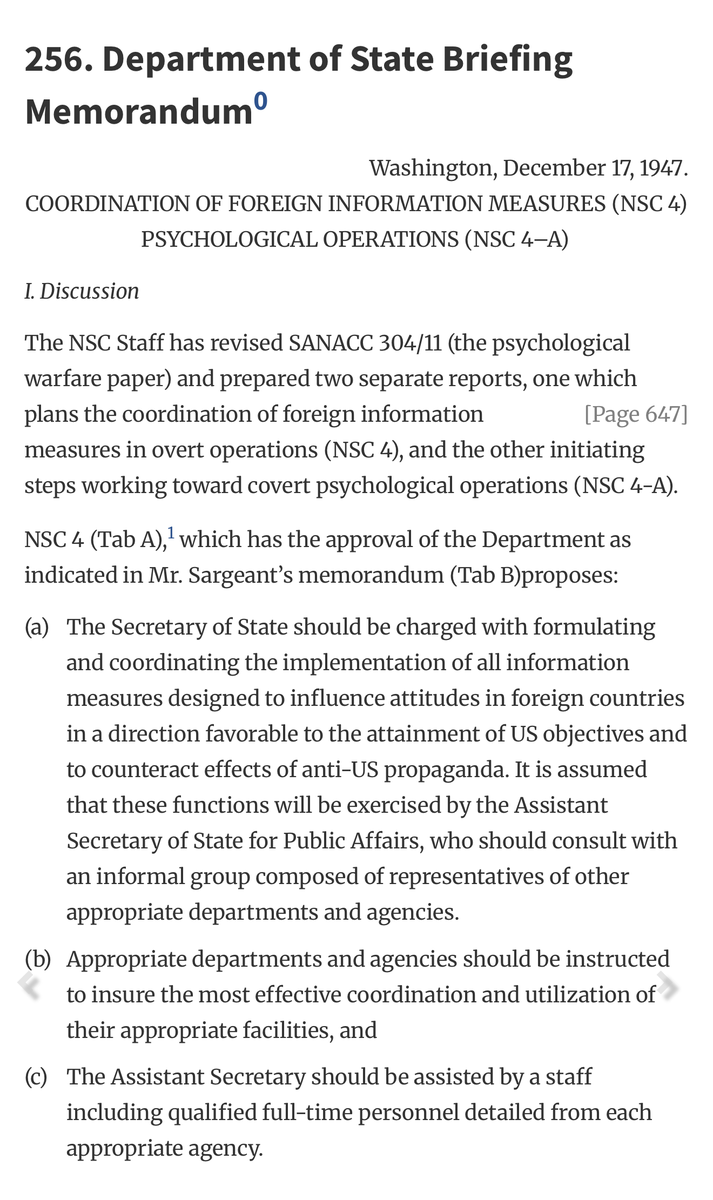

The Cold War Architecture: Building the Machine

The Cold War was the forge in which modern cognitive and influence warfare doctrine was hammered into shape. By 1947, U.S. policymakers, alarmed at the electoral strength of Communist parties in France and Italy, achieved bipartisan consensus that America had to beat the Soviet Union at its own psychological game, even if that meant, as one historian put it, using “subterfuge or state-run media or something stronger.” The first directive authorizing CIA psychological operations, NSC 4-A, was so broad it didn’t even define the term; it simply authorized any activities designed to “counteract Soviet and Soviet-inspired activities.”

Operation Mockingbird and the Media Infiltration

What became known as “Operation Mockingbird,” though the CIA never officially named it, began around 1948 under Frank Wisner, head of the Office of Policy Coordination, who was tasked with creating an apparatus for propaganda, economic warfare, preventive direct action (including sabotage, anti-sabotage and demolition) and subversion against hostile states. Wisner recruited Philip Graham of The Washington Post to run the program within the media industry. By the early 1950s, Wisner had cultivated assets inside The New York Times, Newsweek, CBS, Time, the Miami Herald, and dozens of other outlets. He called the apparatus his “Mighty Wurlitzer,” an organ capable of playing any tune across any number of pipes simultaneously. CIA payments to embedded journalists ranged from $500 to $5,000 per planted story.

The operation was exposed in 1975 by the Church Committee congressional investigations, which revealed deep Agency connections with journalists and civic groups. In 1977, Carl Bernstein published a landmark Rolling Stone investigation documenting that more than 400 U.S. press members had secretly carried out assignments for the CIA, including figures at The New York Times, CBS, and Time Inc. CIA Director William Colby confirmed to the Church Committee that the agency had used journalists as assets. Whether the program ended or merely evolved remains a live question.

MK-ULTRA and the Science of Mind Control

Concurrent with the media infiltration, the CIA ran MK-ULTRA (1953–1973), a sprawling covert research program investigating whether human minds could be directly controlled through drugs (LSD, mescaline), hypnosis, sensory deprivation, psychological torture, and electroconvulsive therapy. MK-ULTRA involved at least 150 research programs across 80 institutions including universities, hospitals, and prisons, much of it conducted on unwitting subjects. The program represented the state’s first systematic attempt to move from influencing minds at scale to reprogramming individual minds directly. It was a dead end for direct control, but a rich source of data on psychological vulnerability and compliance.

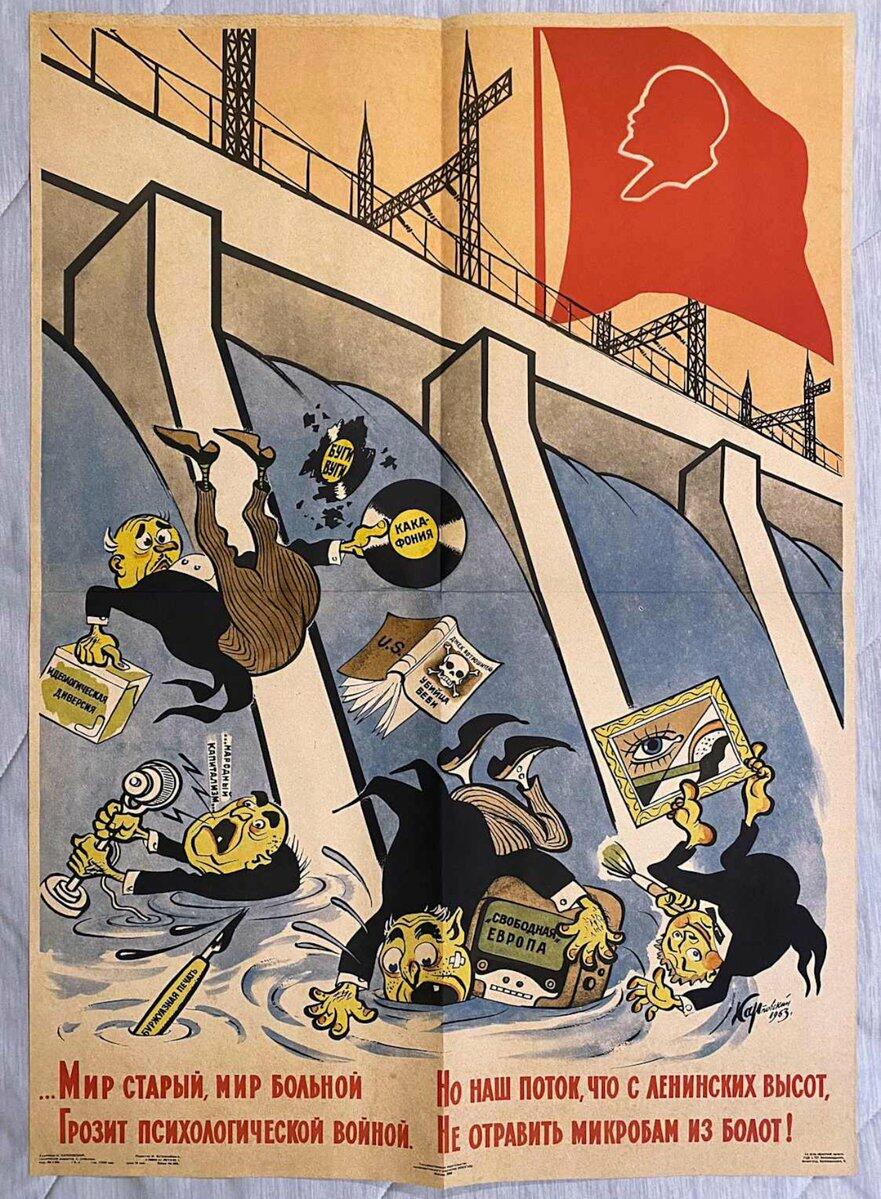

Soviet Reflexive Control

The Soviets built their parallel doctrine around a concept called reflexive control, derived from their old military principle of maskirovka (masking/deception). The core idea is surgically precise: feed an opponent carefully selected information so that the opponent voluntarily makes exactly the decision you want them to make, believing they arrived at it independently. This is not simple propaganda (pushing false content); it is architectural manipulation of the decision-making process itself. The concept was formally taught at Russian military schools, codified in national security doctrine, and has been studied continuously by Russian armed forces at both tactical and operational levels.

The Digital Pivot: Information Warfare as Doctrine

The 1991 Gulf War was the inflection point. The decisive U.S. victory, powered by precision-guided munitions, networked command-and-control, and overwhelming intelligence advantage, triggered an intense period of theorizing about the relationship between information and warfare. Scholars used terms like “information-age warfare,” “cyber war,” “sixth-generation warfare,” and “revolution in military affairs” interchangeably, but all agreed that technological advances had transformed information from a tool of warfare to its fuel.

By 1999, U.S. military doctrine formally defined Information Operations (IO) as “actions taken to affect adversary information and information systems while defending one’s own.” Information Warfare (IW) was a subset: IO conducted during crisis or conflict to achieve specific objectives. Crucially, IW was removed from official military doctrine in 2006, as the information operations community drifted toward preferring technical network solutions (cyber operations, computer network attacks) over the more “cognitive” elements like psychological operations and deception. This was a strategic mistake that adversaries would exploit.

In 2019, Lt. Gen. Stephen Fogarty announced his intention to transform U.S. Army Cyber Command into an information warfare command, arguing: “The power we are going to project globally is information.” By January 2026, the Army had formally established an Information Warfare branch to develop officers trained in “military deception, influence, and combined arms maneuver, with a core emphasis on shaping perception and decision-making.”

The Russian School: Gerasimov and the Art of Chaos

In February 2013, Russian Chief of the General Staff General Valery Gerasimov published an article arguing that the rules of war had fundamentally changed, that non-military means of achieving political and strategic goals had come to exceed the power of conventional force. The Gerasimov Doctrine, as it came to be known in Western analysis, declares that “non-military tactics are not auxiliary to the use of force but the preferred way to win” and that chaos itself is the strategic objective inside an enemy state. The doctrine explicitly integrates Special Operations Forces, civilian media, proxy forces, and cyber capabilities to “influence all actors, disrupt communication, and destabilize regions.”

Russia demonstrated reflexive control in action in Ukraine in 2014: troops without insignia, simultaneous nuclear threats to NATO, coordinated denial of Russian involvement, all designed to paralyze enemy decision-making. The Internet Research Agency (IRA), founded in St. Petersburg and funded by Yevgeny Prigozhin (the mercenary oligarch behind the Wagner Group), was the digital arm of this doctrine. By 2015, the IRA had approximately 400 staff working 12-hour shifts, including 80 trolls focused exclusively on disrupting the U.S. political system. The IRA began targeting the United States as early as 2014, a fact confirmed in Robert Mueller’s 2018 grand jury indictment. Operatives were instructed to watch American TV shows, take grammar lessons, use proxy servers, and build fake American identities to create authentic-seeming social media accounts that accumulated real followers over years before being activated for influence operations.

The Chinese School: Cognitive Domain Operations

China’s People’s Liberation Army has developed what it calls Cognitive Domain Operations (CDO), the systematic targeting of human perception, trust, and decision-making to “win without fighting.” PLA strategists increasingly view the human brain as the decisive battlespace of the 21st century. Japan’s National Institute for Defense Studies (NIDS) documented how China blends cognitive warfare with cyber operations, economic coercion, and military intimidation in the “gray zone,” the space between peace and declared war. RAND and the National Defense University have tracked PLA exploration of neuroscience, brain science, AI, and data analytics specifically to enhance psychological warfare effects.

The PLA’s cognitive warfare categories include military intimidation, bilateral cultural exchanges as influence vectors, religious manipulation, symbolic operations, and, most recently, AI-generated deepfakes and algorithmically targeted content. The CCP’s goal, as the U.S. Army’s Mad Scientist Laboratory puts it, is to “blur fact and fiction, using algorithms to create adaptive realities.” The aim is not simply to lie, but to destroy the audience’s ability to distinguish truth from fabrication at all.

The NATO Response

NATO formally recognized cognitive warfare as a distinct operational domain in 2023. The Allied Command Transformation (ACT) published a Cognitive Warfare Exploratory Concept involving direct contributions from over 20 member nations, NATO commands, academia, and industry. NATO’s framework identifies two key threats driving the need for cognitive warfare doctrine: first, new technologies enabling adversaries to amass and manipulate data and exploit societal divisions; second, the proliferation of social media creating vectors for influencing thoughts, emotions, and actions at population scale.

The Alliance’s stated goal is a two-pronged capability: protecting NATO decision-making from adversarial manipulation while developing offensive cognitive effects against adversaries. As the January 2026 Small Wars Journal analysis of NATO’s Chief Scientist report put it: NATO “treats cognitive warfare as a fight for cognitive superiority, waged through synchronized military and non-military action across the continuum of competition… It does not hide behind jargon. It does not pretend this is only a messaging problem.”

The Private Sector Infrastructure

One of the most underreported dimensions of cognitive and influence warfare is how much of its operational machinery is built and deployed by private corporations — operating largely beyond democratic oversight, selling their services to state actors, corporations, political campaigns, and anyone else who can pay.

Cambridge Analytica

The most publicly documented case is Cambridge Analytica, the British data analytics firm that harvested data from approximately 87 million Facebook users through a privacy loophole involving a third-party personality test app created by Cambridge researcher Aleksandr Kogan. Cambridge Analytica’s weapon was the OCEAN psychographic model, an algorithm scoring users on Openness, Conscientiousness, Extraversion, Agreeableness, and Neuroticism, using Facebook likes and status updates as inputs.

The company built personality profiles for over 100 million registered U.S. voters and used these profiles to serve individually micro-targeted political advertising: different messages, designed for different psychological vulnerabilities, aimed at different people. It worked for both the Trump 2016 campaign and the Brexit Leave campaign. Whether the psychographic targeting was the decisive factor remains debated by researchers, but the architecture it revealed (mass psychological profiling, micro-targeted manipulation and harvested personal data) was not in doubt.

Psy-Group and Black Cube

Israel’s intelligence-sector gray zone has spawned a cottage industry of private influence operations firms. Psy-Group (established 2014 by former Israeli military intelligence officers) operated under the corporate slogan: “Reality is a matter of perception.” Its marketing brochure offered services including “deep due diligence,” “targeting and monitoring,” and “honeypots and covert operations.” Psy-Group created entire fake-persona social media ecosystems, elaborate fake news websites disguised as legitimate news portals, fake influencers, and coordinated narrative campaigns for corporate and political clients. It pitched its services to Trump campaign super-PACs in 2016, was investigated by Robert Mueller, and subsequently shut down under that legal pressure.

Black Cube (established 2010) specialized in human intelligence operations: recruiting former Mossad and Israeli military intelligence officers to conduct sting operations, gather compromising information, and plant defamatory media coverage against targets. Black Cube was most publicly exposed through the Harvey Weinstein affair (he hired it to surveil journalists and silence victims) and through Canadian court documents revealing its involvement with Psy-Group in a corporate espionage case. Terrogence, founded in 2004, was an earlier Israeli pioneer in systematically infiltrating internet forums using fake profiles.

These firms represent the privatized cutting edge of the influence operations industry: tradecraft developed inside state intelligence services, commercialized, and offered on the open market.

The U.S. Government’s Own Cognitive Apparatus

The U.S. has operated a vast and layered cognitive influence architecture, much of which targets both foreign and domestic audiences — often in legally ambiguous ways.

DARPA: Engineering the Mind

The Defense Advanced Research Projects Agency has invested billions in the neuroscience of cognition with military applications. The Next-Generation Nonsurgical Neurotechnology (N3) program aims to develop “high-performance, bi-directional brain-machine interfaces for able-bodied service members” — allowing soldiers to control unmanned vehicles and cyber defense systems with their thoughts, without surgery. The SCEPTER program examines how to augment human cognition directly. DARPA’s broader AI Next campaign invested over $2 billion to advance AI for national security, with roughly 70% of current DARPA programs now incorporating AI and machine learning.

IARPA: Weaponizing Cyberpsychology

The Intelligence Advanced Research Projects Activity (IARPA), established in 2007 as the intelligence community’s equivalent of DARPA, has explicitly turned its attention to the psychology of both attackers and defenders in the information environment. In 2024, IARPA launched ReSCIND (Reimagining Security with Cyberpsychology-Informed Network Defenses), a program designed to leverage cyber attackers’ cognitive vulnerabilities against them. The program manager stated the goal as making “an attacker’s job that much harder” by exploiting their inherent decision-making biases. IARPA’s separate MICrONS project seeks to reverse-engineer one cubic millimeter of mouse brain tissue to improve machine learning algorithms. IARPA has also issued requests for research on “methods and approaches for characterizing the cognitive effects in cyber operations,” explicitly drawing parallels between techniques “currently used in online advertising, political campaigning, e-commerce, and online gaming” and cyber attack vectors.

SOCOM and MISO: The Operational Layer

The U.S. Special Operations Command (SOCOM) is the designated joint proponent for Military Information Support Operations (MISO), formerly called PSYOP. SOCOM established the Joint MISO Web Operations Center (JMWC) in 2018 to support internet-based MISO campaigns run by combatant commands. MISO activities span “terrestrial and satellite television, radio, print products, text messages, social media and websites” targeting “foreign audiences” in regions from South America and the Middle East to Eastern Europe and sub-Saharan Africa.

In 2022, Facebook and Twitter removed nearly 230 accounts linked to covert U.S. military operations for violating platform policies on “platform manipulation and spam” and “coordinated inauthentic behavior.” The Stanford Internet Observatory and Graphika’s joint investigation, “Unheard Voice,” documented an interconnected web of accounts across Twitter, Facebook, Instagram, and five other platforms running pro-Western covert influence campaigns for approximately five years. One notable case involved a fake story about organ theft, designed to drive a wedge between Afghans and Iranians. The revelation prompted Undersecretary of Defense Colin Kahl to demand an urgent internal audit across all military branches — essentially acknowledging that the operations existed.

The GEC and the “Censorship Industrial Complex”

President Obama created the Global Engagement Center (GEC) in March 2016, originally to counter ISIS social media recruitment. Congress broadened its mandate in 2017 to include countering “foreign state and non-state propaganda and disinformation.” Over the following years, GEC funded organizations including the Global Disinformation Index (GDI), which published studies labeling conservative American outlets like Newsmax, OAN, and the New York Post as “high risk” for disinformation — drawing sharp Republican accusations that the GEC had become complicit in censoring Americans rather than foreign adversaries.

Separately, the Cybersecurity and Infrastructure Security Agency (CISA) expanded its mission from protecting critical infrastructure to monitoring domestic social media for “mis-, dis-, and malinformation” related to elections, COVID vaccines, and other subjects, establishing a “switchboarding” operation to route flagged posts from election officials directly to social media platforms for removal. A 2023 House Judiciary Committee report described CISA as the “nerve center of the federal government’s domestic surveillance and censorship operations on social media.”

The apparatus connecting these government entities to academic and civil society partners (Stanford Internet Observatory, Graphika, the Atlantic Council’s Digital Forensics Research Lab (DFRLab), and University of Washington’s Center for an Informed Public) was what critics labeled the “censorship industrial complex.” By 2024, under sustained legal and congressional pressure, the Stanford Internet Observatory effectively collapsed, losing its founding director, its research manager, and most of its staff. In April 2025, Secretary of State Marco Rubio announced the complete closure of the GEC, accusing the office of being used to “actively silence and censor the voices of Americans they were supposed to be serving.”

The Technological Arsenal

Deepfakes and Synthetic Media

The February 2022 deepfake video of Ukrainian President Zelensky appearing to order his troops to surrender, crude by today’s standards, represented what researchers call “the opening salvo in a new era of information warfare.” By 2026, deepfakes have “crossed a critical threshold”: the technology has eliminated earlier telltale glitches and is now accessible to anyone with a smartphone.

In Ireland’s 2025 presidential election, a deepfake falsely depicted the eventual winner withdrawing his candidacy, complete with fake footage of national broadcasters confirming the news, deployed just days before polling. Russia’s CopyCop (Storm-1516) network has launched over 200 deceptive websites across multiple languages from Ukrainian to Swahili, using deepfakes, fabricated interviews, and AI-generated content to embed false narratives into information ecosystems globally.

Large language models now generate persuasive text indistinguishable from human writing across dozens of languages. Voice cloning requires mere seconds of audio. Video synthesis can produce photorealistic footage of events that never occurred. “Historical propaganda required significant resources,” producing bottlenecks. “Those constraints have now disappeared.” A single operator can now project influence at a scale that previously required entire government agencies.

Neuroweapons and Brain-Computer Interfaces

The most speculative but institutionally serious frontier of cognitive warfare is direct neurological manipulation. DARPA’s N3 program is actively developing brain-machine interfaces for combat applications. A University of Korea Army study demonstrated brain wave alteration using EEG and BCI technology in 2022. Researchers have identified military applications that can broadly be split into two categories: performance enhancement (cognitively linking soldiers with semi-autonomous systems) and performance degradation/weaponization (inducing confusion, fear, or compliance in enemy forces or civilian populations).

Dr. James Giordano of Georgetown University, a neuroscientist who advises NATO and the U.S. military, has stated plainly: “The brain is the 21st century battlescape.” Technologies now in development include “neurograins” (microchips the size of salt crystals capable of recording and rewriting neurological activity), pharmaceuticals that can suppress fear responses in soldiers, and magnetically guided injectable computer devices capable of affecting specific brain regions.

The Havana Syndrome incidents, in which U.S. diplomats in Cuba (2016), China, and dozens of other locations suffered neurological symptoms consistent with directed energy exposure, represent a possible real-world deployment of such technology, though attribution remains officially contested.

The Future Implications and the Coming Inflection

As of 2026, the core structural problem is that the cognitive battlespace moves at the speed of social media algorithms, while U.S. governance institutions were designed for the speed of bureaucratic process. A 2026 analysis in the Small Wars Journal states the problem bluntly: “In the time it takes Washington to schedule an interagency meeting, an adversary can frame an incident for half the world.” The recommended solution, centering cognitive warfare governance above the department level and directly within the National Security Council, faces immediate civil liberties objections, since any system designed to respond to adversarial cognitive operations in the domestic information space risks becoming an instrument of domestic political control.

NATO’s 2026 cognitive warfare analysis, produced by the National Defense University, frames the stakes in terms that go beyond military competition. It describes the cognitive warfare threat environment as targeting “trust networks, identity narratives, and institutional legitimacy,” not just individual beliefs. When adversaries can erode the shared factual substrate that democratic deliberation requires, they do not need to win elections or military battles; they simply need to make self-governance impossible.

The most dangerous development may be what researchers call the liar’s dividend: because everyone now knows deepfakes exist, fabricated content does not just deceive; its mere existence provides a permanent alibi for dismissing true content. Real atrocities can be dismissed as deepfakes. Real evidence can be dismissed as AI. Just knowing deepfakes exist can make us doubt the things we read and see, even the truth.

We are seeing this phenomenon play out in real time with the ongoing war in Iran, and it will only get worse as we retreat to our respective ideological bunkers. The conflict offers perhaps the clearest illustration yet of cognitive warfare operating at full industrial scale in a live conflict.

From the opening hours of hostilities, the information environment fractured along lines that had nothing to do with geography and everything to do with pre-existing ideological identity. Depending on which feeds, accounts, and platforms a person inhabits, they are living inside entirely different versions of the same war. One audience sees precision strikes neutralizing an existential nuclear threat. Another sees illegal aggression against a sovereign nation and mass civilian casualties. A third sees a manufactured crisis engineered to serve domestic political interests on multiple sides simultaneously. All three audiences believe they are watching the real war. All three are consuming content, some authentic, some manipulated, some entirely fabricated, that has been algorithmically served to them because it is precisely calibrated to confirm what they already believe and deepen the emotional investment they already have.

Casualty figures are contested in real time, with AI-generated imagery seeded into legitimate news streams before fact-checkers can respond. State actors on multiple sides are running coordinated inauthentic behavior campaigns across every major platform, not primarily to convince the enemy, but to shape domestic and allied audiences, to paralyze international response and to make the cost of taking a clear position feel too high for anyone sitting on the fence. The fog of war has always existed. What is new is that the fog is now being manufactured and distributed on demand by systems that can generate it faster than any human institution can dispel it.

And here is the part that should concern you most: the war in Iran will end, as all wars do. A ceasefire will be declared, or a front will stabilize, or exhaustion will enforce its own terms. But the cognitive infrastructure that is being road-tested on this conflict (the deepfake pipelines, the narrative seeding networks, the psychographic targeting systems, the AI-generated content farms operating in dozens of languages) does not go away. It gets refined and commercialized. It gets turned toward the next election, the next public health crisis, the next social fracture point, the next moment when a population is frightened and searching for an explanation.

That is the true danger to the world that I hope this report has articulated. It is not that any single piece of disinformation will destroy a democracy. It is the cumulative, compounding effect of living inside a permanently manipulated information environment that eventually destroys the one thing that no democracy can survive without: a shared capacity to reason together about what is real.

The war for your mind has already begun. Whether you are a combatant or a casualty is, to a meaningful degree, still your choice.

If you have made it this far, I thank you, and I hope you will consider supporting my work by giving me a follow here on X and subscribing to my Substack at WeTheFree.substack.com.